We do a lot of Illumina-based metabarcode sequencing here at the BioBE center. Sequencing is getting cheaper, and the amount of data you can get from a sequencing run continues to increase, but not at the same rate: it is now becoming more and more common to sequence samples across multiple sequencing runs, because a single run does not provide the necessary sequencing depth.

The field, as a whole, is still trying to work out how combine samples from different sequencing runs: because the error rates and read distributions tend to be specific to a given sequencing run, it can be difficult to distinguish between run effects and biological effects.

We’ve recently run across an interesting case, while working to improve our bioinformatics pipelines.

It is common for sequencing facilities to spike in Phi-X DNA to add heterogeneity to the library being sequenced; this heterogeneity prevents synchronous fluorescence from any given base overwhelming the sensor (Phi-X reads are removed bioinformatically, generally by the sequencing facility). There is, however, a more sequencing-efficient way to introduce heterogeneity into your library: variable length spaces between the Illumina adapter and the target sequence. This method doesn’t “waste” sequencing on Phi-X, but still handily prevents synchronous fluorescence. The problem is, sometimes those spaces may not be fully removed before data processing.

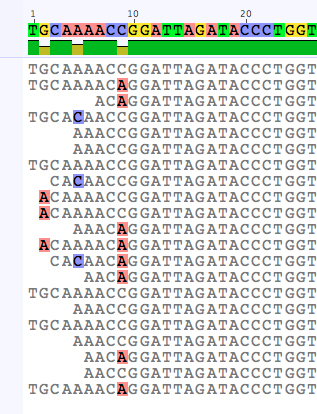

In collaborating with colleagues to test various options for merging data from distinct sequencing runs, we were working with some problematic data that included samples re-sequenced in two different Illumina MiSeq runs. We discovered that they had such heterogeneity spaces that had not been removed by the sequencing facility. This didn’t matter at all when processing with QIIME and uclust, because the 97% OTU radius was enough to “lump” all of the spacer sequences into the same OTU, but when working with denoising tools that infer exact sequence variants (ESVs), like DADA2, it altered the ability to recognize that the dominant sequences from the same sample in the two different runs were the same.

There are several potential solutions to this problem, but the best one is to always make sure you understand your data fully, and remove any potential sources of artificial variation before inferring sequence variants or picking OTUs. Usually, that means searching for and removing the PCR primers from each sequence, along with any sequence behind them — there are many programs out there with this functionality, including the FastX toolkit, trimmomatic, and cutadapt. If you’re sequencing a variable length region, like the internal transcribed spacer (ITS) of the ribosomal DNA, this also has the benefit of removing artificial variation introduced by sequencing past the primer on the other side of the short amplicons.

Another potential solution if you’re using DADA2 is to use “100%” OTU clustering (that is collapsing all sequences that differ only be length into the same inferred variant). There is, conveniently, an option baked into dada() for that: collapseNoMismatch = TRUE. The DADA2 pipeline also did a much better job of recognizing that different sequences with artificial variation were actually the same when using pool = TRUE, although pooling all samples for sequence inference is likely too computationally intensive to be a viable solution.

Additionally, Paul McMurdie points out that we can look for irregularities early on with DADA2:

Another way to note this early in your process is to check that the error rates look reasonable for your platform/amplicon, e.g. if you had previous successful runs for that amplicon and seq platform, you could check that the error profiles are not wildly different. If they are, you usually have a problem with trimming.

I am, however, not able to see a clear signal of the heterogeneity spacers in the error profiles for this data. It may vary with the length and variation within the spacers — I’ll surely be adding an error profile check to my standard workflow, though.

After removing the primers and spaces, we get much better agreement between sequencing runs (although we still get 45–65% of ESVs in only one run or the other). We’re still investigating this particular issue: you can follow the ongoing discussion (and contribute!) on the DADA2 GitHub page.